Hi guys,

Today, let’s study Naive Bayes. Bayes’ formula is well known to all of us, but how to apply it to classification may be puzzling. Here’s a very brief tutorial on it from Andrew Ng, which I highly recommend taking a look at (only 10 minutes!).

1. Background: the different types of naive Bayes classifiers differ mainly in the distribution they assume for P(x_i | y).


2. MLlib supports multinomial naive Bayes and Bernoulli naive Bayes, which are typically used for document classification.

3. A common point: the “naive” in Naive Bayes refers to the assumption that every pair of features is conditionally independent given the class.
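Putting points 1 and 3 together: by Bayes’ formula, and assuming the features are conditionally independent given the class y, the posterior factors into one term per feature. The classifier then picks the class with the largest product, and the variants differ only in how each P(x_i | y) is modeled (multinomial, Bernoulli, Gaussian, and so on):

```latex
P(y \mid x_1, \dots, x_n) = \frac{P(y) \prod_{i=1}^{n} P(x_i \mid y)}{P(x_1, \dots, x_n)},
\qquad
\hat{y} = \arg\max_{y} \; P(y) \prod_{i=1}^{n} P(x_i \mid y)
```

The denominator is the same for every class, so it can be dropped when taking the argmax.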

Multinomial naive Bayes: in the document-classification context, each observation is a document and each feature represents a term whose value is the frequency of that term in the document.

Bernoulli naive Bayes: each feature is a zero or one indicating whether the term was found in the document.
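To make the two feature representations concrete, here is a small scikit-learn sketch on a made-up corpus. The documents, terms, and labels are hypothetical; the point is only that `MultinomialNB` consumes raw term counts while `BernoulliNB` consumes 0/1 presence indicators.

```python
import numpy as np
from sklearn.naive_bayes import BernoulliNB, MultinomialNB

# Hypothetical toy corpus: 4 documents, 3 terms ("ball", "goal", "vote").
# Each entry is the number of times the term appears in the document.
counts = np.array([
    [3, 2, 0],  # sports
    [2, 4, 0],  # sports
    [0, 0, 5],  # politics
    [1, 0, 3],  # politics
])
labels = np.array(["sports", "sports", "politics", "politics"])

# Multinomial NB is trained on the raw term frequencies.
multi = MultinomialNB().fit(counts, labels)

# Bernoulli NB only looks at whether each term occurs (count > 0 -> 1).
presence = (counts > 0).astype(int)
bern = BernoulliNB().fit(presence, labels)

# A new document that mentions "ball" twice and "goal" once.
new_doc = np.array([[2, 1, 0]])
multi_pred = multi.predict(new_doc)
bern_pred = bern.predict((new_doc > 0).astype(int))
print("multinomial:", multi_pred, "bernoulli:", bern_pred)
```

On this toy data both models label the new document as sports; the Bernoulli model simply ignores that "ball" appeared twice.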

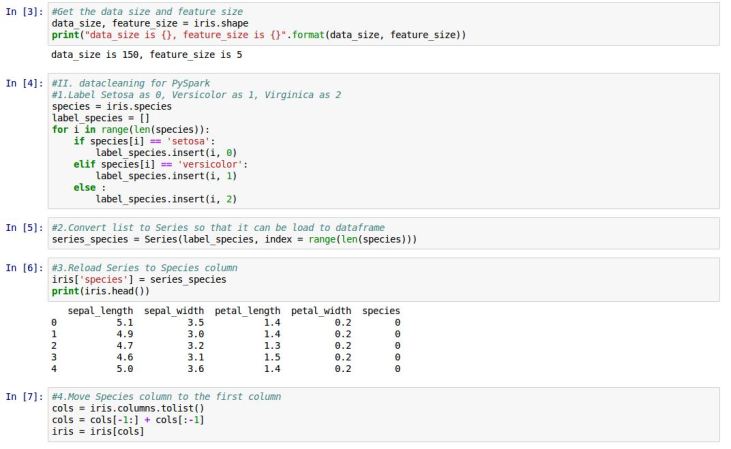

Let’s look at the IPython code. The GitHub repo is here.

We will still use the classic Iris dataset here with all three categories.

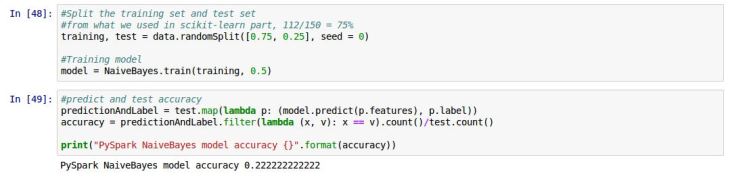
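Here is a minimal sketch of the scikit-learn side of the comparison. It is not the post’s original notebook: it loads scikit-learn’s bundled copy of Iris instead of the CSV, and the 70/30 stratified split and `random_state=42` are our own assumptions, so the numbers will not necessarily match the post’s results.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import BernoulliNB, MultinomialNB

# Load all three Iris classes (150 samples, 4 real-valued features).
X, y = load_iris(return_X_y=True)

# Hold out 30% for testing; a fixed seed keeps the split reproducible.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y)

models = {
    "Multinomial": MultinomialNB(),
    "Bernoulli": BernoulliNB(),
}

accuracy = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    # score() returns the mean accuracy on the held-out test set.
    accuracy[name] = model.score(X_test, y_test)
    print(f"{name:12s} accuracy: {accuracy[name]:.3f}")
```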

Next, let’s use the MLlib model.
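MLlib’s `NaiveBayes` trains a multinomial model by default, with additive smoothing controlled by a parameter λ (default 1.0); in PySpark’s RDD-based API the call is `NaiveBayes.train(data, lambda_=1.0)` on an RDD of `LabeledPoint`. Because running that needs a Spark installation, the sketch below instead reproduces the standard multinomial estimates in plain NumPy so the math is visible. It is our own illustration, not MLlib’s source code: the helper names are hypothetical, the class prior is estimated without smoothing, and details may differ from MLlib’s implementation. It is cross-checked against scikit-learn’s `MultinomialNB` with the same smoothing.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.naive_bayes import MultinomialNB


def fit_multinomial_nb(X, y, lam=1.0):
    """Hypothetical helper: log class priors and smoothed log likelihoods."""
    classes = np.unique(y)
    log_prior = np.log(np.array([np.mean(y == c) for c in classes]))
    # Sum feature values per class, then apply additive (Laplace) smoothing.
    feature_sums = np.array([X[y == c].sum(axis=0) for c in classes])
    smoothed = feature_sums + lam
    log_theta = np.log(smoothed / smoothed.sum(axis=1, keepdims=True))
    return classes, log_prior, log_theta


def predict_multinomial_nb(model, X):
    """Hypothetical helper: argmax of log P(y) + sum_i x_i * log theta_{y,i}."""
    classes, log_prior, log_theta = model
    # The multinomial coefficient is identical for every class, so it is dropped.
    scores = X @ log_theta.T + log_prior
    return classes[np.argmax(scores, axis=1)]


X, y = load_iris(return_X_y=True)
model = fit_multinomial_nb(X, y, lam=1.0)
ours = predict_multinomial_nb(model, X)

# Cross-check against scikit-learn's MultinomialNB with the same smoothing.
sk = MultinomialNB(alpha=1.0).fit(X, y)
reference = sk.predict(X)
print("agreement with scikit-learn:", np.mean(ours == reference))
```

Working in log space turns the product of many small probabilities into a sum, which avoids numerical underflow.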

We can see that the accuracy of the MLlib Naive Bayes model is similar to that of the Bernoulli naive Bayes model in scikit-learn, while scikit-learn’s multinomial model achieves better accuracy. The lower accuracy is most likely because the Iris features are real-valued measurements rather than integer counts. If we converted the features to integers, the accuracy would likely improve.
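A quick way to try the integer suggestion is sketched below. Scaling by 10 is our own choice (it works because Iris measurements have one decimal place), as are the split and seed; we have not run this against the post’s notebook, so treat any improvement or lack of one as something to check rather than a given.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB

X, y = load_iris(return_X_y=True)

# Iris measurements have one decimal place, so scaling by 10 and rounding
# turns them into exact integer "counts" (e.g. 5.1 cm -> 51).
X_int = np.rint(X * 10).astype(int)

# Split both versions of the features with the same indices.
X_tr, X_te, Xi_tr, Xi_te, y_tr, y_te = train_test_split(
    X, X_int, y, test_size=0.3, random_state=42, stratify=y)

results = {}
for label, (train, test) in {"real-valued": (X_tr, X_te),
                             "integer": (Xi_tr, Xi_te)}.items():
    results[label] = MultinomialNB().fit(train, y_tr).score(test, y_te)
    print(f"MultinomialNB on {label} features: {results[label]:.3f}")
```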

References:

1. https://spark.apache.org/docs/latest/mllib-naive-bayes.html

2. http://matplotlib.org/api/pyplot_api.html?highlight=scatter#matplotlib.pyplot.scatter

3. http://scikit-learn.org/stable/modules/naive_bayes.html

Thanks for a great post!

Can you please share the link to the sample dataset used in this exercise so that we can practice?

Thanks in advance.


Sure, it’s at https://github.com/mwaskom/seaborn-data/blob/master/iris.csv
